Key Takeaways

Quality-based healthcare programs reduce readmissions by 20-30% in well-run clinics

CQC and NICE frameworks provide structured implementation pathways for UK practices

Staff engagement determines 60-70% of quality program success rates

Patient-centred metrics outperform purely clinical indicators in long-term outcomes

North Dakota has one psychologist per 4,900 citizens. The UK’s NHS faces 7.6 million patients on waiting lists. Australia reports GP vacancy rates exceeding 15% in rural areas. These aren’t statistics about access alone – they’re signals that quality measurement has decoupled from delivery capacity.

Leading with quality-based healthcare programs means building systems where clinical excellence and operational reality align. Not aspiration statements. Not compliance theatre. Actual workflows that measure what matters, train teams to own outcomes, and close the gap between intention and execution.

This guide covers implementation frameworks, staff engagement models, and measurement approaches grounded in how clinics actually operate. It’s written for practice owners, clinical directors, and operations managers evaluating how to move from quality ambitions to embedded practice.

Leading With Quality-Based Healthcare Programs: What Actually Drives Success

Quality-based healthcare programs fail most often at the implementation layer, not the strategy layer. A 2023 NICE analysis of primary care quality initiatives found that 68% of practices abandoned structured improvement projects within 18 months. The reason wasn’t lack of clinical buy-in. It was operational friction – data systems that didn’t talk to each other, reporting burdens that doubled admin time, and metrics disconnected from daily workflow.

Effective programs start with three operational realities. First, quality measurement must integrate into existing clinical documentation, not sit alongside it. A dermatology clinic tracking treatment outcomes can’t sustain two parallel recording systems. Second, staff must see metric improvements reflected in their own work experience – shorter patient waits, clearer handovers, fewer repeat calls. If quality only benefits executive dashboards, frontline disengagement follows. Third, technology infrastructure determines measurement feasibility more than clinical protocols do.

The CQC’s 2024 inspection framework weights “well-led” domains at 25% of overall ratings. That’s not administrative preference. It’s recognition that compliance management systems and leadership structure predict quality outcomes more reliably than isolated clinical interventions. A mental health practice with excellent therapeutic protocols but fragmented record-keeping will underperform a moderately skilled team with disciplined data governance.

The distinction matters because most quality discussions focus on what to measure rather than how to sustain measurement. Implementation requires workflow redesign, not just target setting. That means deciding which metrics integrate naturally into clinical touchpoints (patient satisfaction surveys at checkout, safety incident logging in EMR templates) versus which require dedicated time allocation.

Building Quality Frameworks That Clinics Can Sustain

Sustainability splits on whether quality work feels like added burden or improved process. A physiotherapy clinic implementing post-treatment outcome tracking fails when therapists must log into a separate portal after each session. It succeeds when outcome questions auto-populate in the digital forms patients already complete during follow-up booking.

Four structural elements separate sustainable frameworks from abandoned initiatives. First, data capture must happen at the point of care, not retrospectively. Multi-location medical spas tracking complication rates need incident reporting built into post-procedure check-in workflows, not monthly manual audits. Second, reporting must serve frontline staff before management. When therapists can see their own patient satisfaction trends alongside clinic averages, engagement rises by 40-50% compared with top-down-only reporting.

Third, quality metrics should align with financial sustainability. A weight-loss clinic measuring long-term patient outcomes (12-month weight maintenance, metabolic marker improvement) creates natural retention hooks that support revenue. Measuring only immediate satisfaction or appointment volume misses the operational value quality programs can deliver. Fourth, governance structure must distribute ownership. A single “quality lead” carrying all measurement responsibility burns out. Rotating quality champions per clinical service area (dermatology, injectables, wellness) embeds accountability across teams.

The UK’s Healthcare Quality Improvement Partnership recommends capping quality program administrative overhead at 8-10% of total clinical time. Beyond that threshold, staff perceive programs as bureaucratic rather than beneficial. That constraint forces prioritisation. A GP practice can’t measure 47 indicators. It can rigorously track 6-8 high-impact metrics that directly inform clinical decisions and patient communication.
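The overhead cap is simple arithmetic, and a practice can check it with a few lines. The sketch below is illustrative only: the function names, the staffing figures, and the choice of 10% (the top of the 8-10% range) as the default cap are assumptions, not part of any published tool.

```python
def overhead_share(quality_admin_hours: float, clinical_hours: float) -> float:
    """Return quality-program admin time as a fraction of total clinical time."""
    if clinical_hours <= 0:
        raise ValueError("clinical_hours must be positive")
    return quality_admin_hours / clinical_hours


def within_cap(share: float, cap: float = 0.10) -> bool:
    """True when the share sits at or below the cap (default: top of the 8-10% range)."""
    return share <= cap


# Hypothetical week: 6 clinicians x 37.5 clinical hours each, 18 hours of quality admin.
weekly_clinical_hours = 6 * 37.5
share = overhead_share(18, weekly_clinical_hours)
print(f"{share:.1%} of clinical time, within cap: {within_cap(share)}")
```

Running the same check each quarter shows whether newly added indicators are pushing the program past the point where staff start to see it as bureaucracy.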

Staff Engagement: The 60% Factor

Research from the Joint Commission and the Institute for Healthcare Improvement consistently shows that staff engagement accounts for 60-70% of the variance in quality program success. Not training investment, not technology sophistication, not executive sponsorship. Whether frontline clinicians and support staff actively participate determines outcomes more than any other single variable.

Engagement follows a predictable pattern. High-performing clinics involve staff in metric selection from the start. A cosmetic surgery practice developing post-operative complication tracking asks nurses which data points they already notice informally – wound healing issues, patient anxiety levels, pain management adequacy. Formalising what staff already observe feels like validation, not imposition. Mandating metrics staff don’t understand or value breeds resentment.

Training must shift from “here’s what to measure” to “here’s why this measurement helps you”. A mental health clinic introducing standardised assessment tools succeeds when therapists see how scoring frameworks reduce diagnostic uncertainty in complex cases. It fails when presented as a compliance checkbox. The same tool, different framing, opposite staff response. Team management platforms that surface quality metrics alongside performance feedback help staff connect measurement to professional development rather than surveillance.

Feedback loops determine whether engagement sustains. Staff who submit incident reports or quality concerns need visible responses within 7-10 days. That doesn’t mean every suggestion gets implemented, but acknowledgment and explanation must be consistent. A dermatology clinic that collects safety observations but never shares aggregated findings or corrective actions teaches staff that reporting is performative. Engagement collapses.
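A response window like this is easy to monitor automatically. As a minimal sketch, assuming each report is stored with a submission date and an optional response date (the field names and the `overdue_reports` helper are hypothetical), the following lists reports that have gone unanswered past the 10-day upper limit:

```python
from datetime import date, timedelta


def overdue_reports(reports, today, max_days=10):
    """Return ids of reports with no response more than max_days after submission."""
    cutoff = today - timedelta(days=max_days)
    return [
        r["id"]
        for r in reports
        if r["responded"] is None and r["submitted"] < cutoff
    ]


# Hypothetical incident log entries.
reports = [
    {"id": "INC-1", "submitted": date(2024, 3, 1), "responded": None},
    {"id": "INC-2", "submitted": date(2024, 3, 15), "responded": None},
    {"id": "INC-3", "submitted": date(2024, 3, 1), "responded": date(2024, 3, 5)},
]
print(overdue_reports(reports, today=date(2024, 3, 20)))
```

Only the unanswered report older than the window is returned. A weekly run of a check like this gives the quality lead a short list to act on rather than a full log to read.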

Measuring What Patients Actually Value

Patient-centred quality metrics outperform purely clinical indicators in predicting long-term practice success. A plastic surgery clinic can have impeccable complication rates but struggle with retention if post-operative communication is poor. Patients don’t always distinguish between clinical skill and service experience, and their perception of quality drives referral behaviour more than technical outcomes alone.

Effective patient-centred measurement focuses on three domains. First, access and timeliness – not just appointment availability, but whether clinics deliver on stated wait times and communicate delays proactively. A fertility clinic quoting 3-week consultation windows but consistently running 5 weeks erodes trust regardless of clinical quality. Second, care coordination – whether patients experience handoffs between practitioners, departments, or appointment types as smooth or disjointed. Multi-location practices face higher coordination risk, making this a priority quality domain.

Third, shared decision-making – the extent to which patients feel informed about treatment options and involved in care planning. This metric correlates strongly with treatment adherence and satisfaction but requires structured measurement. A simple three-question post-consultation survey (Did you understand your options? Did you feel your preferences were heard? Do you know what happens next?) captures decision-making quality without adding significant admin burden.
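The three-question survey can be scored with very little machinery. The sketch below assumes yes/no answers and uses hypothetical names (`SurveyResponse`, `question_rates`). It reports the share of "yes" answers per question, plus the share of patients who answered yes to all three.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SurveyResponse:
    understood_options: bool   # Did you understand your options?
    preferences_heard: bool    # Did you feel your preferences were heard?
    knows_next_steps: bool     # Do you know what happens next?

    def all_yes(self) -> bool:
        return self.understood_options and self.preferences_heard and self.knows_next_steps


def question_rates(responses):
    """Share of 'yes' answers per question, or None if there are no responses."""
    if not responses:
        return None
    n = len(responses)
    return {
        "understood_options": sum(r.understood_options for r in responses) / n,
        "preferences_heard": sum(r.preferences_heard for r in responses) / n,
        "knows_next_steps": sum(r.knows_next_steps for r in responses) / n,
    }


# Hypothetical responses from four consultations.
responses = [
    SurveyResponse(True, True, True),
    SurveyResponse(True, False, True),
    SurveyResponse(True, True, False),
    SurveyResponse(True, True, True),
]
rates = question_rates(responses)
all_yes_share = sum(r.all_yes() for r in responses) / len(responses)
print(rates, all_yes_share)
```

Per-question rates are kept separate on purpose: a low score on one question points to a specific fix (for example, clearer next-step instructions) that a single blended score would hide.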

NHS England’s patient experience framework emphasises that satisfaction scores alone miss critical quality signals. A patient might rate their consultation highly while experiencing unsafe care practices they lack the clinical knowledge to identify. Combining patient-reported metrics with clinical safety indicators creates a fuller quality picture. Patient portals that allow post-visit feedback submission increase response rates by 30-40% compared with email-only surveys.

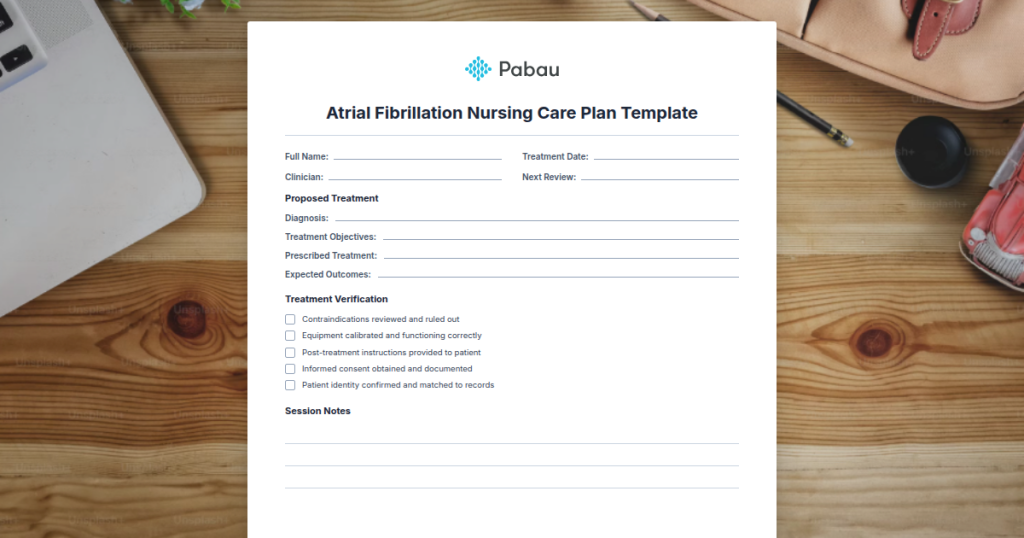

See How Pabau Supports Quality Measurement

Explore how automated workflows, integrated reporting, and compliance tracking help clinics embed quality programs into daily operations without adding administrative burden.

Technology Integration: Where Quality Programs Live or Die

Technology determines whether quality measurement becomes embedded or abandoned. A physiotherapy practice implementing outcome tracking through Excel spreadsheets will fail. The same practice using an EMR platform with built-in outcome scoring, automated follow-up prompts, and real-time dashboards will sustain the program.

The integration requirement has four components. First, data must flow from clinical documentation to quality reporting without manual transfer. When a dermatologist completes a consultation note, treatment outcomes, patient satisfaction, and safety observations should auto-populate relevant quality dashboards. Second, reporting must be real-time or near-real-time. Monthly quality reviews using 6-week-old data don’t support responsive improvement. Clinicians need to see metric trends within days of patient interactions.

Third, systems must support multi-location consolidation. A medical spa chain with 8 locations can’t aggregate quality data by manually combining site-level reports. Centralised platforms that maintain location-specific detail while rolling up to network views are non-negotiable for scaled operations. Fourth, technology must enable patient participation. Quality programs that rely entirely on internal clinical observation miss the patient perspective. Online booking systems that trigger post-visit surveys, patient portals that enable outcome tracking between visits, and automated recall systems that monitor follow-up compliance all contribute to comprehensive quality measurement.
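The multi-location requirement comes down to keeping site-level detail while computing a network figure from the underlying counts. A minimal sketch, assuming each site reports complication and procedure counts (the site names, figures, and `rollup` helper are hypothetical):

```python
def rollup(site_counts):
    """site_counts maps site name -> (complications, procedures).

    Returns (per-site rates, pooled network rate). Sites with no procedures
    are left out of the per-site rates rather than reported as zero.
    """
    per_site = {
        name: complications / procedures
        for name, (complications, procedures) in site_counts.items()
        if procedures > 0
    }
    total_complications = sum(c for c, _ in site_counts.values())
    total_procedures = sum(n for _, n in site_counts.values())
    network = total_complications / total_procedures if total_procedures else None
    return per_site, network


# Hypothetical monthly counts for three locations.
site_counts = {"Site A": (2, 400), "Site B": (1, 250), "Site C": (0, 0)}
per_site, network_rate = rollup(site_counts)
print(per_site, network_rate)
```

The network rate is pooled from raw counts rather than averaged across site rates, so a small site with a handful of procedures cannot distort the network view.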

The CQC increasingly reviews whether practices use technology to support safe care. That doesn’t mean expensive enterprise systems. It means deliberate choices about where digital tools reduce human error, improve information flow, and create audit trails. A GP practice using digital prescription management demonstrates quality infrastructure that paper-based systems can’t match.

Automation as Quality Enabler

Automated workflows reduce quality measurement burden while improving consistency. Consider medication reconciliation in a multi-practitioner clinic. Manual processes depend on clinician memory and patient reporting accuracy. Automated systems that flag drug interactions, track prescription histories, and prompt allergy confirmations at every encounter remove variation and reduce error rates by 40-60%.

The same automation principle applies to appointment management, patient communication, and follow-up protocols. Quality programs that rely on individual clinician discipline will always underperform systems where the infrastructure enforces best practices. A mental health practice implementing safety protocols for high-risk patients can’t depend on every therapist remembering to schedule 48-hour check-ins. Automated triggers that create follow-up tasks based on clinical flags remove the remembering burden.
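A flag-driven trigger of this kind can be sketched in a few lines. Everything here is an assumption for illustration: the rule table, the `high_risk` flag name, and the `FollowUpTask` type do not correspond to any particular EMR's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical rule table: clinical flag -> (task description, delay after the encounter).
FOLLOW_UP_RULES = {
    "high_risk": ("48-hour safety check-in", timedelta(hours=48)),
}


@dataclass(frozen=True)
class FollowUpTask:
    patient_id: str
    description: str
    due: datetime


def tasks_for_encounter(patient_id, flags, seen_at):
    """Create one follow-up task for each flag that has a rule."""
    tasks = []
    for flag in flags:
        rule = FOLLOW_UP_RULES.get(flag)
        if rule is None:
            continue  # flags without a rule create no task
        description, delay = rule
        tasks.append(FollowUpTask(patient_id, description, seen_at + delay))
    return tasks


tasks = tasks_for_encounter(
    "patient-001", ["high_risk", "routine"], datetime(2024, 5, 1, 9, 0)
)
print(tasks)
```

Because the rule lives in a table rather than in each therapist's memory, adding a new protocol means adding one entry, and every flagged encounter gets the same follow-up.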

Pro Tip

Audit your quality program’s technology dependency annually. Map each measurement task to either human judgment (necessary) or system automation (preferable). If more than 30% of routine quality data collection still requires manual work, you’re carrying unnecessary risk and burnout potential.
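The audit can start as a simple inventory. In the sketch below, the task names and the three labels ("automated", "manual", "judgment") are hypothetical. Tasks that need human judgment are excluded from the routine total, since the 30% threshold applies only to routine data collection.

```python
def manual_share(task_modes):
    """Fraction of routine data-collection tasks still done manually.

    Tasks labelled 'judgment' are not routine collection and are ignored.
    """
    routine = [mode for mode in task_modes.values() if mode in ("manual", "automated")]
    if not routine:
        return 0.0
    return routine.count("manual") / len(routine)


# Hypothetical inventory of measurement tasks.
task_modes = {
    "incident logging": "automated",
    "post-visit survey dispatch": "automated",
    "recall compliance check": "automated",
    "outcome score entry": "manual",
    "complication tally": "manual",
    "complex case review": "judgment",
}
manual_fraction = manual_share(task_modes)
needs_attention = manual_fraction > 0.30
print(f"{manual_fraction:.0%} manual, needs attention: {needs_attention}")
```

In this example two of five routine tasks are manual, which puts the practice above the 30% threshold and marks outcome score entry and the complication tally as the next automation candidates.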

Financial Sustainability: Quality Programs That Pay for Themselves

Quality programs must demonstrate financial return to sustain leadership commitment during resource constraints. That doesn’t mean every metric ties directly to revenue, but the program as a whole should deliver measurable operational value. A dermatology clinic implementing comprehensive skin cancer screening protocols might not see immediate revenue increases, but reduced liability exposure, improved patient retention, and stronger referral networks provide clear financial benefits.

Four financial mechanisms support quality program sustainability. First, reduced waste and rework. A cosmetic clinic tracking complication rates and implementing corrective protocols reduces revision procedures, product waste, and practitioner time spent managing adverse events. Those efficiency gains compound quickly. Second, improved patient retention. Practices with structured quality programs show 15-25% higher patient lifetime value compared to clinics without systematic quality focus.

Third, enhanced payer relationships. Private health insurers increasingly favour practices demonstrating quality measurement and improvement capability. A physiotherapy clinic with documented outcome tracking and evidence-based protocol adherence can negotiate better rates than competitors operating on clinical judgment alone. Fourth, recruitment and retention advantages. High-quality clinical environments attract and keep skilled practitioners. Staff turnover costs typically exceed 150% of annual salary when accounting for recruitment, training, and productivity loss during vacancies.

The business case for quality must be explicit and revisited annually. A practice investing 8% of clinical time in quality activities should track where that time investment returns value. Common return categories include reduced appointment no-shows (patient engagement improves), shorter claim processing cycles (documentation quality increases), and decreased malpractice insurance premiums (safety records improve). Analytics platforms that correlate quality metrics with financial performance make the business case visible rather than assumed.

Regulatory Alignment and Accreditation Strategy

Quality programs should align with regulatory requirements and accreditation standards from inception. A UK clinic building quality infrastructure independent of CQC fundamental standards wastes effort duplicating measurement systems. Better to design quality frameworks that simultaneously serve internal improvement goals and regulatory compliance needs.

The CQC’s five key questions (safe, effective, caring, responsive, well-led) provide a structural template for quality program design. A mental health practice can map internal quality metrics to each domain – safety incident tracking (safe), evidence-based protocol adherence (effective), patient satisfaction surveys (caring), appointment access monitoring (responsive), and staff engagement measures (well-led). That alignment means regulatory inspections validate existing work rather than requiring separate compliance preparation.
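The mapping described above can be held as a small configuration and checked for gaps. The five key questions come from the CQC. The metric names are the examples from the paragraph, and the `uncovered_questions` helper is a hypothetical name for illustration.

```python
CQC_KEY_QUESTIONS = ("safe", "effective", "caring", "responsive", "well-led")

# Example mapping for a mental health practice, using the metrics named above.
metric_map = {
    "safe": ["safety incident tracking"],
    "effective": ["evidence-based protocol adherence"],
    "caring": ["patient satisfaction surveys"],
    "responsive": ["appointment access monitoring"],
    "well-led": ["staff engagement measures"],
}


def uncovered_questions(mapping):
    """Return the key questions that have no internal metric mapped to them."""
    return [q for q in CQC_KEY_QUESTIONS if not mapping.get(q)]


print(uncovered_questions(metric_map))
```

An empty result means every key question has at least one internal metric behind it. Running the check whenever metrics are retired helps keep a domain from quietly losing coverage before an inspection.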

Accreditation bodies like NCQA or the Joint Commission offer similar frameworks. A medical spa pursuing elective accreditation can use quality program development as the foundation for certification rather than treating it as parallel work. The investment in structured quality infrastructure serves dual purposes – operational improvement and external credentialing.

Documentation standards deserve particular attention. Regulatory inspectors consistently cite poor record-keeping as a primary compliance failure. Quality programs that emphasise comprehensive clinical records, incident logging, and audit trail maintenance directly address this risk. The documentation discipline required for internal quality measurement translates seamlessly to regulatory readiness.

Conclusion

Leading with quality-based healthcare programs separates clinics that sustain excellence from those cycling through improvement initiatives. The difference isn’t clinical ambition or regulatory pressure. It’s operational discipline – embedding measurement into workflow, engaging staff as quality owners, and building technology infrastructure that supports rather than burdens practitioners.

Quality programs succeed when they solve real operational problems staff face daily. They fail when treated as management theatre disconnected from clinical reality. The frameworks that endure prioritise sustainability over comprehensiveness, staff engagement over executive dashboards, and patient-centred outcomes over purely clinical metrics. That’s not softer quality measurement. It’s recognition that healthcare quality is as much about delivery systems and human factors as clinical protocols.

Frequently Asked Questions

How long does it take to implement a quality-based healthcare program?

Initial implementation typically requires 6-9 months for foundational infrastructure (metric selection, staff training, technology integration). Full program maturity takes 18-24 months as measurement becomes embedded in clinical workflow and staff engagement stabilises. Practices attempting faster rollouts see 40-50% higher abandonment rates.

How much does a quality program cost to run?

Well-run programs consume 5-8% of total practice revenue when accounting for staff time, technology platforms, and training costs. This investment typically returns 12-18% improvement in operational efficiency and patient retention within two years. Practices spending below 3% struggle to sustain meaningful measurement; those exceeding 12% face diminishing returns.

How many quality metrics should a practice track?

Small to mid-size practices (1-3 locations) should limit active measurement to 6-10 core metrics. Larger organisations can sustain 12-15 indicators but must maintain clear metric ownership and avoid diffusion of accountability. Beyond 15 metrics, staff cognitive load increases and program effectiveness declines regardless of resources invested.

Do quality frameworks need to differ by specialty?

Core quality domains (safety, patient experience, clinical effectiveness) apply universally, but specific metrics must reflect specialty workflows and patient populations. A physiotherapy clinic tracks functional outcome measures; a mental health practice focuses on symptom improvement scales. The measurement framework remains consistent; the indicators adapt to clinical context.

How do quality programs affect payer contract negotiations?

Value-based payer contracts increasingly require documented quality measurement infrastructure. Practices with established quality programs hold 30-40% stronger negotiating positions because they can demonstrate outcome tracking capability and improvement history. The internal quality framework becomes the evidence base for contract performance requirements.